Users Report Confusion as ChatGPT Inserts Arabic into Responses

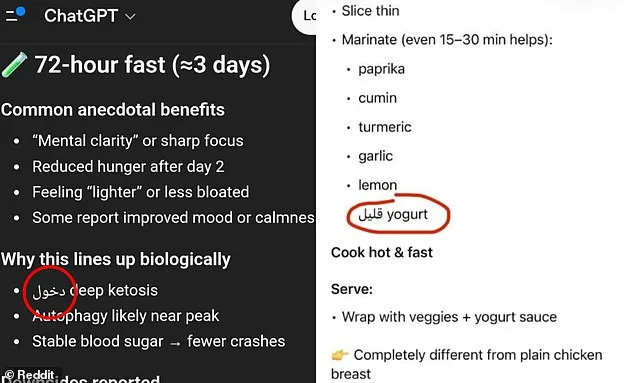

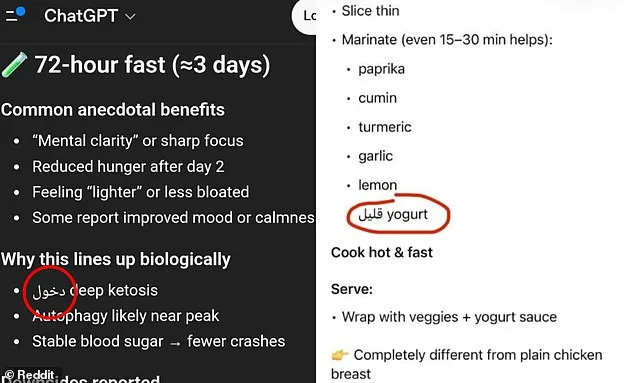

Americans are growing increasingly uneasy as ChatGPT, one of the most widely used AI chatbots, begins inserting Arabic words into responses—often without explanation. Users across the country have reported encountering this phenomenon over the past month, with some claiming it has occurred on their phones, laptops, and even during work-related tasks. 'It did it twice on my phone, and once on my work laptop,' wrote one Reddit user, sharing screenshots of ChatGPT responding to a recipe query with Arabic text. 'I'm not even in an Arabic-speaking country.'

The issue has sparked confusion and frustration among users, many of whom have posted images of the unexpected language mix on social media. Others described seeing numbers transformed into Arabic script or entire sentences appearing in Armenian, Hebrew, Spanish, Chinese, and Russian. 'It's not gibberish,' noted another Reddit commenter, explaining that the foreign words often corresponded to English equivalents. 'The word means "low." So it looks like it's missing a word. Possibly low-fat yogurt.'

OpenAI, the company behind ChatGPT, has yet to provide a detailed public explanation for the recent surge in language mixing. The phenomenon appears to stem from how the AI model is trained. Unlike humans, who read full words, ChatGPT breaks text into small units called 'tokens', which can be parts of words, punctuation, or even fragments from other languages. Some foreign words are shorter and require fewer tokens, making them more likely to appear in responses if they fit contextually. 'It's not the AI switching languages on purpose,' one tech analyst explained. 'It's choosing the most probable next piece of text based on probability.'
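The analyst's point about choosing "the most probable next piece of text" can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the candidate tokens, the probabilities, and the `<foreign-token>` placeholder are invented, and production chatbots typically sample from the distribution rather than always taking the single top candidate.

```python
def most_probable_token(candidates):
    """Return the candidate token with the highest probability."""
    return max(candidates, key=candidates.get)


# Hypothetical probabilities for the next token in a recipe answer.
# "<foreign-token>" is a placeholder for a non-English token, not real model output.
next_token_probs = {
    "low": 0.41,
    "<foreign-token>": 0.44,
    "plain": 0.15,
}

# The selection step has no notion of "language": it only compares probabilities,
# so a slightly higher score for the foreign token is enough for it to win.
print(most_probable_token(next_token_probs))
```

In this toy setup the foreign token wins by a narrow margin, which is one way a meaningful but out-of-place word could surface in an otherwise English reply.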

ChatGPT, used by nearly 900 million people monthly, has faced similar issues before. In 2024, users reported widespread 'gibberish' responses linked to a token-mapping error during an update. However, this latest issue appears different. 'The words aren't random,' said a user who shared a screenshot of ChatGPT inserting the Arabic word for 'table' into a response about furniture. 'They actually mean something. But why?'

OpenAI has acknowledged some language-related glitches but has not directly addressed the recent Arabic mixing. When asked about the issue, a company spokesperson stated, 'We take user feedback seriously and are continuously refining our models.' However, many users feel their concerns are being overlooked. 'They keep saying it's a technical quirk,' said one Reddit poster. 'But this isn't just a glitch—it's affecting real people.'

The problem highlights a deeper challenge in AI training: balancing efficiency with clarity. ChatGPT prioritizes the most 'logical' token sequence, even if that means using a foreign word. For example, the English word 'understanding' might be split into three tokens—'under,' 'stand,' and 'ing'—but the AI might opt for a single Arabic token instead. 'It's about efficiency,' explained a language researcher. 'The model isn't malicious—it's just following its programming.'
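The three-token split of 'understanding' described above can be reproduced with a toy tokenizer. This is a sketch under stated assumptions: `TOY_VOCAB` is a made-up vocabulary, and the greedy longest-match rule is a simplification standing in for the byte-pair encoding that models like ChatGPT generally use.

```python
# Hypothetical vocabulary for illustration; not ChatGPT's real token set.
TOY_VOCAB = {"under", "stand", "ing", "low", "fat"}


def greedy_tokenize(text, vocab):
    """Split text into the longest matching vocabulary pieces, left to right."""
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest possible piece first, then shrink the window.
        for j in range(len(text), i, -1):
            if text[i:j] in vocab:
                tokens.append(text[i:j])
                i = j
                break
        else:
            # No vocabulary entry matched: emit one character as its own token.
            tokens.append(text[i])
            i += 1
    return tokens


print(greedy_tokenize("understanding", TOY_VOCAB))
```

With this vocabulary the word comes out as 'under', 'stand', and 'ing', matching the example in the text. A word that exists in the vocabulary as a whole would come out as a single token instead.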

Yet some users argue that the issue isn't random at all. 'Earlier versions of ChatGPT never did this,' said one user on X (formerly Twitter). 'Something has changed.' Others speculated that the recent updates might have introduced new training data or altered token mapping, though OpenAI has not confirmed this.

For now, users are left with a mix of confusion and skepticism. 'I trust AI to help me write essays, but I don't want it to start speaking in Arabic,' said one Reddit user. 'It's creepy. It's like the AI is trying to communicate something—but we're not sure what.'

As the debate continues, one thing is clear: ChatGPT's language quirks are no longer just a technical footnote. They're a growing concern for millions of users who rely on the tool daily. And until OpenAI provides a more detailed explanation, the mystery of the Arabic words—and why they keep appearing—remains unsolved.

"Something is seriously wrong here," said one user who has relied on AI tools for years. They described encountering a glitch that felt deliberate, not accidental. "This isn't just a random error," they insisted. Their frustration echoed across online forums where similar complaints were growing.

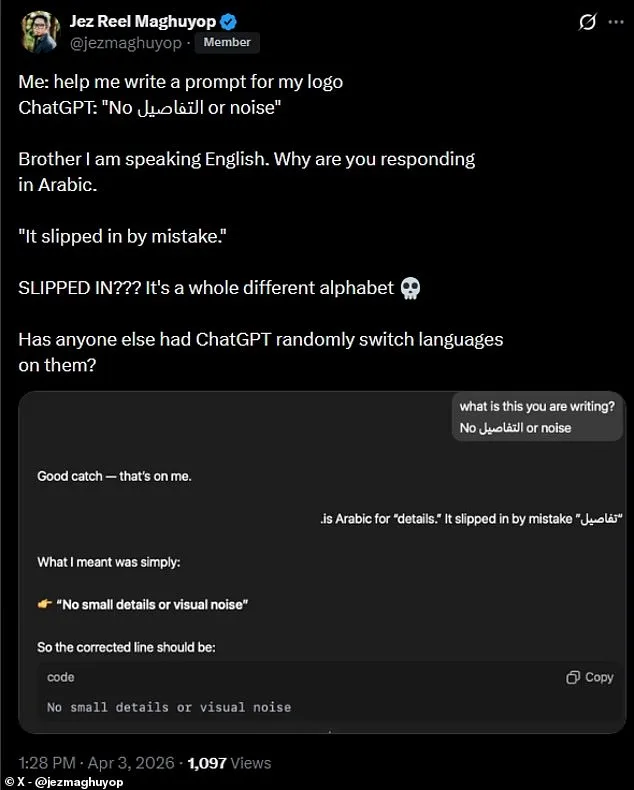

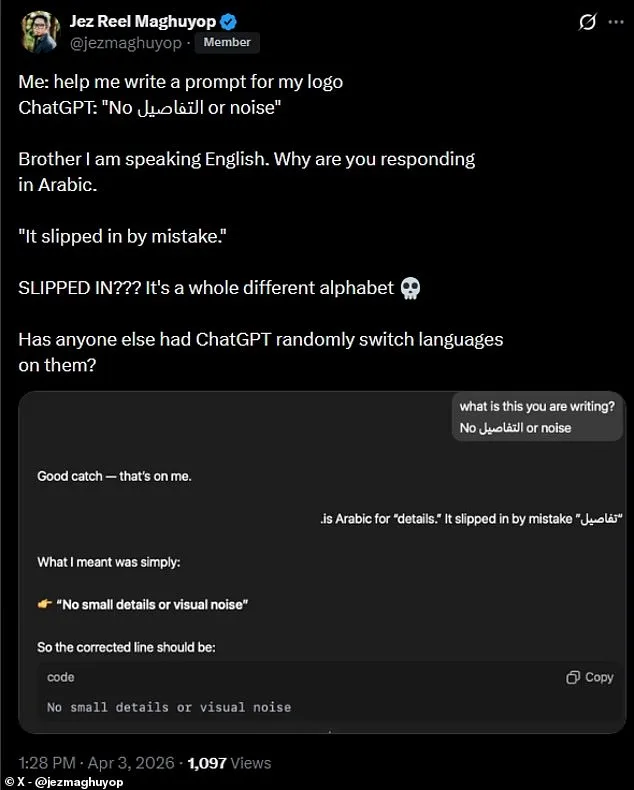

A different user shared a baffling experience on social media. While asking ChatGPT a question in English, the response included an Arabic word. "Brother, I'm speaking English," they posted on X. "Why are you responding in Arabic?" The AI's reply was dismissive: "It slipped in by mistake." The user was unimpressed. "Slipped in? It's a whole different alphabet," they wrote.

Industry insiders say these incidents highlight a deeper issue. AI systems are trained on vast datasets, but errors like this suggest gaps in quality control. Experts warn that such glitches could erode public trust, especially as governments push for stricter oversight of AI tools. "Regulators are watching closely," one analyst noted. "Mistakes like this might not be just technical—they could be legal."

For everyday users, the problems are immediate. A teacher using AI to grade essays found Arabic characters embedded in student work. A journalist relying on AI for research had to fact-check responses that included unrelated languages. "This isn't just inconvenient," one user said. "It's a reminder that we're still learning how to handle these tools."

Behind the scenes, companies are scrambling to address the issue. Sources close to the development teams say audits are underway. But users remain skeptical. "They claim it's a mistake," one person wrote. "But when does a mistake happen this often?" The pressure is mounting—not just from customers, but from regulators who see these errors as potential red flags.

Some experts argue that the problem goes beyond technical flaws. "AI isn't just a tool anymore," said a policy researcher. "It's part of our infrastructure. When it fails, it affects everything." From education to healthcare, reliance on AI is growing. A single error could have far-reaching consequences.

For now, users are left waiting. Companies are silent on timelines for fixes. Meanwhile, the complaints keep coming. "I'm not the only one," one user wrote. "This isn't isolated. It's systemic." As the public grows more aware of these issues, the debate over AI regulation is only going to intensify.
